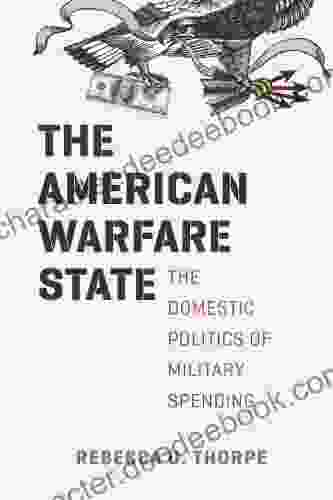

Unveiling the American Warfare State: A Comprehensive Examination of Its Roots, Evolution, and Impact

The American warfare state is a term used to describe the dominant role that the United States military and its associated industries play in American society and politics. It is a complex and...

Noah Blair · 6 min read
